Here’s a statement, and you can decide for yourself how you feel about it: the fundamental laws that govern the smallest constituents of matter and energy, when applied to the Universe over long enough cosmic timescales, can explain everything that will ever emerge. This means that the formation of literally everything in our Universe, from atomic nuclei to atoms to simple molecules to complex molecules to life to intelligence to consciousness and beyond, can all be understood as something that emerges directly from the fundamental laws underpinning reality, with no additional laws, forces, or interactions required.

This simple idea — that all phenomena in the Universe are fundamentally physical phenomena — is known as reductionism. In many places, including right here on Big Think, reductionism is treated as though it’s not the taken-for-granted default position about how the Universe works. The alternative proposition is emergence, which states that qualitatively novel properties are found in more complex systems that can never, even in principle, be derived or computed from fundamental laws, principles, and entities.

While it’s true that many phenomena are not obviously emergent from the behavior of their constituent parts, reductionism should be the default position, with anything else being the equivalent of the God-of-the-gaps argument. Here’s why.

The fundamental

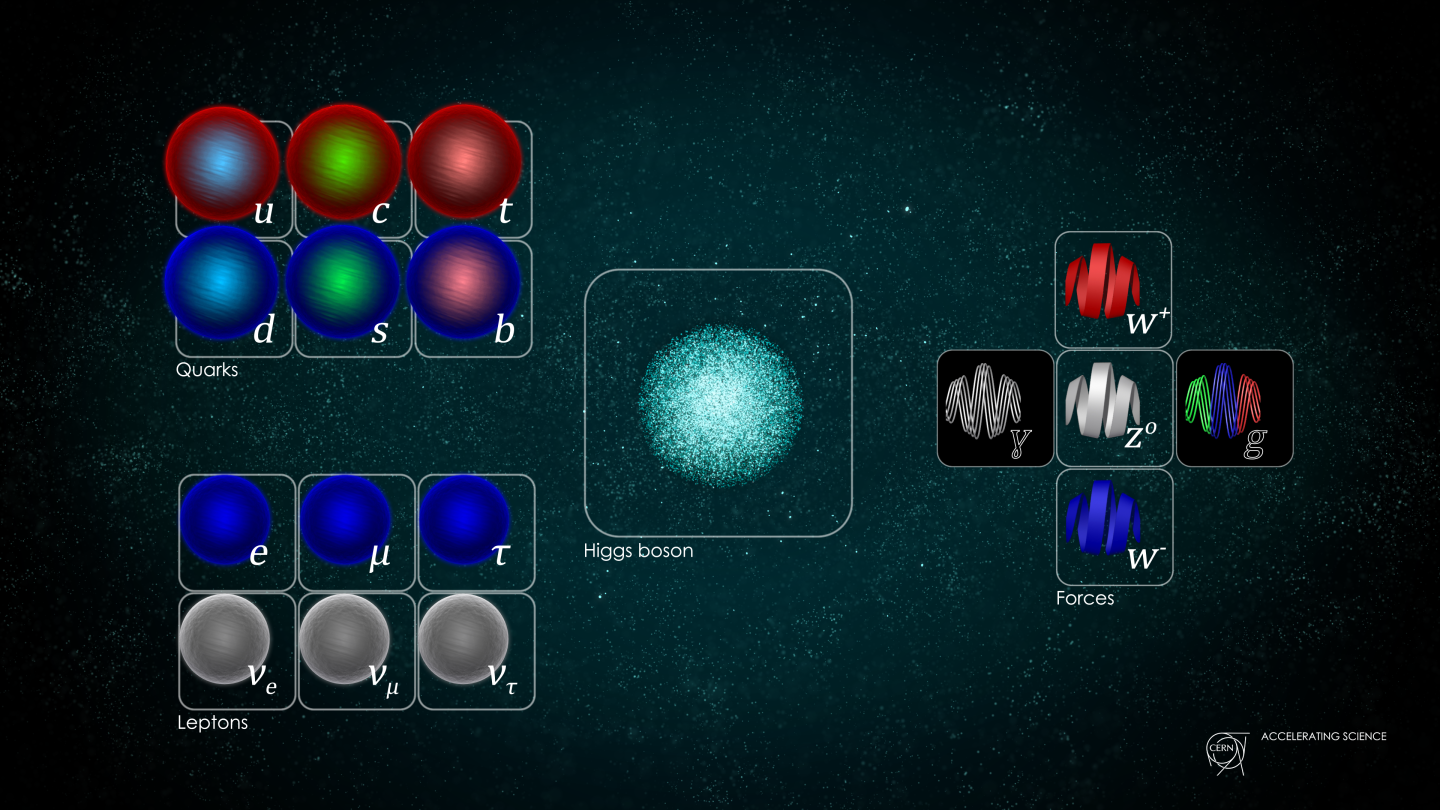

When we think about “what is fundamental” in the Universe, we turn to the most indivisible, elementary entities of all and the laws that govern them. For our physical reality, that means we ought to start with the particles of the Standard Model and the interactions that govern them, as well as whatever dark matter and dark energy turn out to be (their nature is still unknown), and to build every known phenomenon and complex entity out of them.

As long as there’s a combination of forces that are relatively attractive at one scale but relatively repulsive at a different scale, we’ll form bound structures out of these fundamental entities. Given that we have four fundamental forces in the Universe, including:

- short-range nuclear forces that come in two types, a strong version and a weak version,

- a long-range electromagnetic force, where “like” charged particles repel and “unlike” charged particles attract,

- and a long-range gravitational force, which is always attractive between any two masses,

we should fully expect that structures will emerge on small, intermediate, and large scales.

Indeed: this is precisely what we get. On the smallest scales, the strong nuclear force binds quarks into bound structures, three-at-a-time, known as baryons. The lightest two baryons are the most stable: the proton, which is 100% stable, and the neutron, which survives with a mean lifetime of about 15 minutes even when it isn’t bound to anything else.

The strong nuclear force is capable of binding protons and neutrons together into atomic nuclei: even overcoming the repulsive electromagnetic force between like (positive) charges due to having multiple protons in the nucleus. Some nuclei will be stable against decays, others will undergo one or more decays before producing a stable end-product.

And then, the electromagnetic force leverages two facts about the Universe.

- That, overall, it’s electrically neutral, with the same number of negative charges (electrons) as there are positive charges (protons) in existence.

- And that each electron is tiny in mass compared to each proton, neutron, and atomic nucleus.

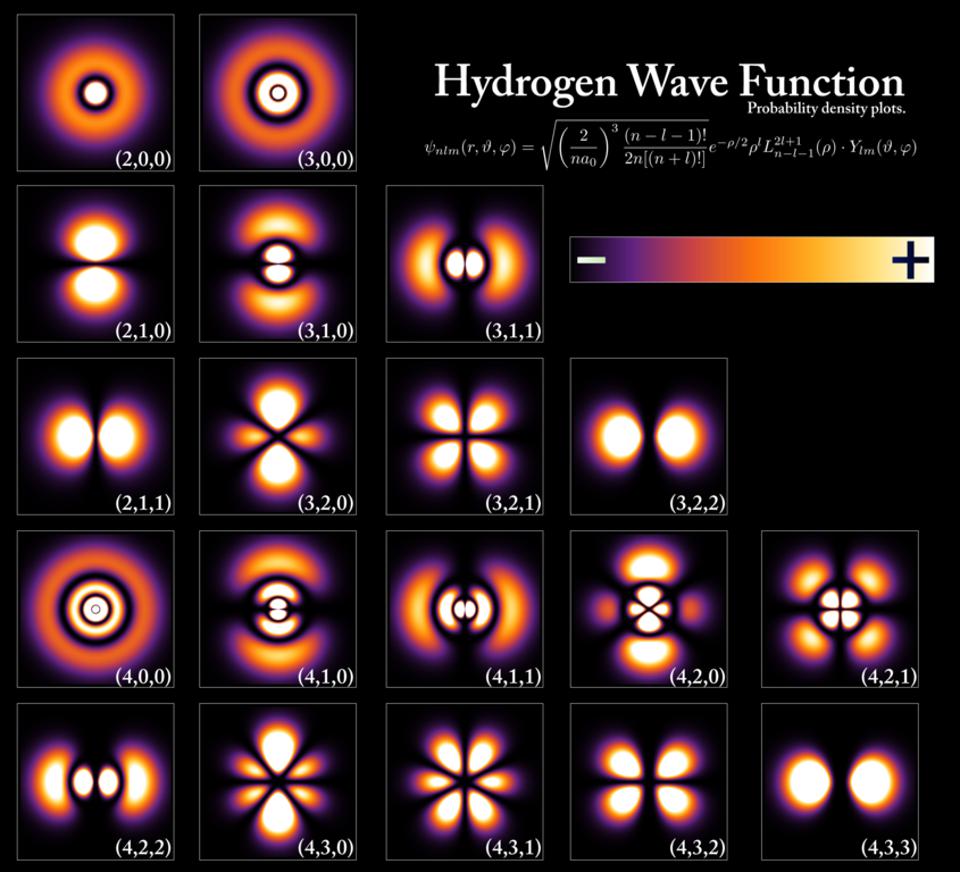

This allows electrons and nuclei to form neutral atoms, where every unique species of atom, depending on the number of protons in its nucleus, has its own unique electron structure, in accordance with the fundamental laws of quantum physics that govern our Universe.

How a reductionist sees the Universe

It’s very important, when we discuss the idea of reductionism, that we don’t “strawman” the reductionist’s position. The reductionist doesn’t say — nor does the reductionist need to assert — that they have an explanation for each and every complex phenomenon that arises in every imaginable complex structure. Some composite structures and some properties of complex structures will be easily explicable from the underlying rules, sure, but the more complex your system becomes, the more difficult you can expect it will be to explain all of the various phenomena and properties that emerge.

That latter piece cannot be considered “evidence against reductionism” in any way, shape, or form. The fact that “There exists this phenomenon that lies beyond my ability to make robust predictions about” is never to be construed as evidence in favor of “This phenomenon requires additional laws, rules, substances, or interactions beyond what’s presently known.”

You either understand your system well enough to understand what should and shouldn’t emerge from it, in which case you can put reductionism to the test, or you don’t, in which case you have to fall back on the null hypothesis: that there’s no evidence for anything novel.

And, to be clear, the “null hypothesis” is that the Universe is 100% reductionist. That means a suite of things.

- That all structures that are built out of atoms and their constituents — including molecules, ions, and enzymes — can be described based on the fundamental laws of nature and the component structures that they’re made out of.

- That all larger structures and processes that occur between those structures, including all chemical reactions, don’t require anything more than those fundamental laws and constituents.

- That all biological processes, from biochemistry to molecular biology and beyond, as complex as they might appear to be, are truly just the sum of their parts, even if each “part” of a biological system is remarkably complex.

- And that everything that we regard as “higher functioning,” including the workings of our various cells, organs, and even our brains, doesn’t require anything beyond the known physical constituents and laws of nature to explain.

To date, although it shouldn’t be controversial to make such a statement, there is no evidence for the existence of any phenomenon that falls outside of what reductionism is capable of explaining.

How “apparent emergence” is readily explained by reductionism

For some properties inherent to complex systems, it’s pretty easy to explain why they exist as they do. The mass (or weight, if you prefer to use scales) of a macroscopic object is, quite simply, the sum of the masses of the components that make it up, minus the mass lost to the energy binding those components together, via Einstein’s E = mc².
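As a rough worked example of that bookkeeping, the sketch below compares the mass of a helium-4 atom with the summed masses of its free constituents. The rounded mass values and the function name `mass_defect_u` are assumptions introduced here for illustration; they do not come from the text above.

```python
# Rounded atomic masses in unified atomic mass units (u); approximate values.
M_HYDROGEN_1 = 1.00782503   # one proton plus one electron
M_NEUTRON = 1.00866492      # one free neutron
M_HELIUM_4 = 4.00260325     # measured mass of the bound helium-4 atom
MEV_PER_U = 931.494         # energy equivalent of 1 u via E = mc^2, in MeV


def mass_defect_u(n_protons, n_neutrons, bound_mass_u):
    """Mass of the free parts minus the mass of the bound whole, in u."""
    parts = n_protons * M_HYDROGEN_1 + n_neutrons * M_NEUTRON
    return parts - bound_mass_u


# Helium-4: two protons (counted as hydrogen atoms, so the electrons
# balance) and two neutrons.
defect = mass_defect_u(2, 2, M_HELIUM_4)
binding_energy_mev = defect * MEV_PER_U

print(f"mass defect:    {defect:.5f} u")
print(f"binding energy: {binding_energy_mev:.1f} MeV")
```

With these inputs the missing mass comes out to roughly 0.03 u, or about 28.3 MeV of binding energy, which is a little under 1% of the total. The whole weighs slightly less than the sum of its parts, and the difference is fully accounted for by the binding energy.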

For other properties, it’s not such an easy task, but it has been accomplished. We can explain how thermodynamic quantities like heat, temperature, entropy, and enthalpy emerge from a complex, large-scale ensemble of particles. We can explain the properties of many molecules through the science of quantum chemistry, which can be derived directly from the underlying fundamental laws. We can use those same fundamental laws to understand — although the computing power required is immense — how various molecules, such as peptides and proteins, fold into their equilibrium configurations and also into metastable states.
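To make the thermodynamic case concrete, here is a minimal sketch of temperature as a statistic of an ensemble and not a property of any single particle. It assumes an ideal monatomic gas whose velocity components follow the Maxwell-Boltzmann (Gaussian) distribution; the helium mass, the 300 K input, and the function names are illustrative choices, not anything specified in the text.

```python
import math
import random

K_B = 1.380649e-23        # Boltzmann constant, J/K
M_HELIUM = 6.6464731e-27  # approximate mass of one helium-4 atom, kg


def sample_velocity(temperature_k, mass_kg, rng):
    """Draw one 3D velocity; each component is Gaussian (Maxwell-Boltzmann)."""
    sigma = math.sqrt(K_B * temperature_k / mass_kg)
    return tuple(rng.gauss(0.0, sigma) for _ in range(3))


def ensemble_temperature(velocities, mass_kg):
    """Recover temperature from mean kinetic energy: <KE> = (3/2) k_B T."""
    total_ke = sum(
        0.5 * mass_kg * (vx * vx + vy * vy + vz * vz)
        for vx, vy, vz in velocities
    )
    mean_ke = total_ke / len(velocities)
    return 2.0 * mean_ke / (3.0 * K_B)


rng = random.Random(0)  # fixed seed so the run is repeatable
gas = [sample_velocity(300.0, M_HELIUM, rng) for _ in range(100_000)]

# A single particle gives a wildly scattered "temperature";
# the ensemble average settles close to the input value.
print("one particle:", ensemble_temperature(gas[:1], M_HELIUM))
print("ensemble:    ", ensemble_temperature(gas, M_HELIUM))
```

Nothing in the code knows about "temperature" at the level of an individual atom; the quantity only becomes well defined once many particles are averaged, which is the sense in which it emerges from the underlying mechanics.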

And then there are properties that we cannot fully explain, but for which we are also incapable of making robust predictions about what we should expect to see under those conditions. These “hard problems” often include systems that are far too complex to model with current technology, such as human consciousness.

In other words, what appears to be emergent to us today, with our present limitations on what is within our power to compute, may someday in the future be describable in purely reductionist terms. Many such systems that were once incapable of being described via reductionism have, with superior models (as far as what we choose to pay attention to) and the advent of improved computing power, now been successfully described in precisely a reductionist fashion. Many seemingly chaotic systems can, in fact, be predicted to whatever accuracy we arbitrarily choose, so long as enough computational resources are available.
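The point about chaotic systems can be illustrated with a toy model. The sketch below uses the logistic map x → 4x(1 − x), a standard textbook example of deterministic chaos chosen here as an assumption (it is not discussed in the text), and measures how many steps a finite-precision calculation stays within a tolerance of a much higher-precision reference. The function names, starting value, and tolerance are all hypothetical choices.

```python
from decimal import Decimal, localcontext


def logistic_orbit(x0, steps, digits):
    """Iterate x -> 4x(1 - x) using `digits` significant decimal digits."""
    with localcontext() as ctx:
        ctx.prec = digits
        x = Decimal(x0)
        orbit = []
        for _ in range(steps):
            x = 4 * x * (1 - x)
            orbit.append(x)
        return orbit


def prediction_horizon(digits, reference_digits=400, steps=300,
                       tol=Decimal("0.001")):
    """Steps until a `digits`-precision orbit drifts more than `tol`
    away from a high-precision reference orbit (capped at `steps`)."""
    approx = logistic_orbit("0.123", steps, digits)
    reference = logistic_orbit("0.123", steps, reference_digits)
    for n, (a, b) in enumerate(zip(approx, reference)):
        if abs(a - b) > tol:
            return n
    return steps


for digits in (20, 40, 80):
    print(digits, "digits ->", prediction_horizon(digits), "steps")
```

Because small errors in this map grow roughly exponentially, each additional digit of working precision buys only a few more steps of reliable prediction. The horizon is finite for any fixed budget, but it can be pushed out as far as desired by spending more computation, which is the sense of "predictable to arbitrary accuracy" used above.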

Yes, we can’t rule out non-reductionism, but wherever we’ve been able to make robust predictions for what the fundamental laws of nature do imply for large-scale, complex structures, they’ve been in agreement with what we’ve been able to observe and measure. The combination of the known particles that make up the Universe and the four fundamental forces through which they interact has been sufficient to explain, from atomic to stellar scales and beyond, everything we’ve ever encountered in this Universe. The existence of systems that are too complex to predict with current technology is not an argument against reductionism.

The God-of-the-gaps nature of non-reductionism

But it is true that resorting to non-reductionism — or the notion that completely novel properties will emerge within a complex system that cannot be derived from the interactions of its constituent parts — is tantamount, at this point in time, to a God-of-the-gaps argument. It basically says, “Well, we know how things behave on a certain scale or at a certain time, and we know how they behaved on a smaller scale or at an earlier time, but we can’t fill in all the steps to get from that small scale/early time to understand how the large scale/later time behavior comes about, and therefore, I’m going to insert the possibility that something magical, divine, or otherwise non-physical comes into play.”

Although this is an assertion that is difficult to disprove, it’s one that has not only zero, but negative scientific value. The whole process of science involves investigating the Universe with the tools we have at our disposal for investigating reality, and determining the best physical model, description, and set of conditions that describes that reality. What a fool’s errand it is to assert, “Maybe we need more than our current best model to describe reality” when:

- we don’t even have the computational or modeling power necessary to put our current model to the test,

- and where these are precisely the regimes in which science is very likely, in the very near future, to show that inserting something magical, divine, or non-physical is wholly unnecessary.

If you either believe or simply want to believe that there’s more to the Universe than the sum of its physical parts, that’s a statement that science is completely agnostic about. However, if you want to believe that a description of the physical phenomena that exist in this Universe requires either:

- something more than the physical laws that govern the Universe,

- and/or something other than the physical objects that exist in the Universe,

perhaps the least successful decision you can make is to put those “metaphysical” entities in a place where science, once it advances just a little bit further, can disprove the need for them entirely.

I have never understood why one would be so willing to assert the existence of the divine or supernatural in a place where it would be so easy to falsify the need for it. Why would you believe, in a Universe that’s so vast, that something beyond the capability of our physical laws to describe would primarily appear in such an extraneous, unnecessary place? If the Universe, as we observe and measure it, isn’t able to be described by what’s physically present within it under the known laws of reality, shouldn’t we determine that to actually be the case before resorting to non-scientific, supernatural explanations?

Final thoughts

The fundamental components of our physical Universe, along with the fundamental laws that govern all of existence, represent the most successful scientific picture of the Universe in all of history. Never before, from the tiniest subatomic particles to macroscopic phenomena to cosmic scales, have we ever had such a successful way of describing our physical reality as we do today. The idea of reductionism is simple: that physical phenomena can be explained by the complex combination of the objects that exist within the Universe, governed by the same physical laws that govern all physical systems within the Universe.

That’s our default starting point: the “null hypothesis” for what reality is.

If that’s not your starting point, it’s my duty to inform you that the burden of proof lies with you. You must show that the null hypothesis is insufficient to describe a phenomenon where its predictions are clear, and in conflict with what can be observed and/or measured. This is a very high bar to clear, and an endeavor that no opponent of reductionism has ever succeeded at. We may not understand everything there is to know about all complex phenomena (and the more complex a phenomenon is, the harder it is to derive all of its properties from the fundamental), but that’s not the same as having evidence that something more is required.

In science, however, we don’t simply say, “This problem is hard, so maybe the answer lies beyond science?” The only way we ever move forward is by conducting more and better science, relentlessly, until we figure out how it all works.