Multiple teams of scientists can’t agree on how fast the Universe expands. Dark matter may unlock why.

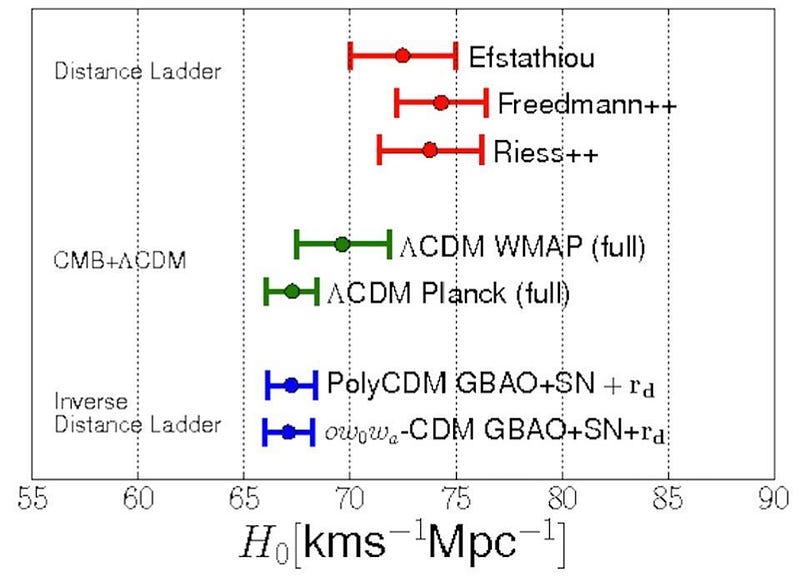

There’s an enormous controversy in astrophysics today over how quickly the Universe is expanding. One camp of scientists, the same camp that won the Nobel Prize for discovering dark energy, measured the expansion rate to be 73 km/s/Mpc, with an uncertainty of only 2.4%. But a second method, based on the leftover relics from the Big Bang, reveals an answer that’s incompatibly lower at 67 km/s/Mpc, with an uncertainty of only 1%. It’s possible that one of the teams has an unidentified error that’s causing this discrepancy, but independent checks have failed to show any cracks in either analysis. Instead, new physics might be the culprit. If so, it could give us our first real clue to how dark matter might be detected.

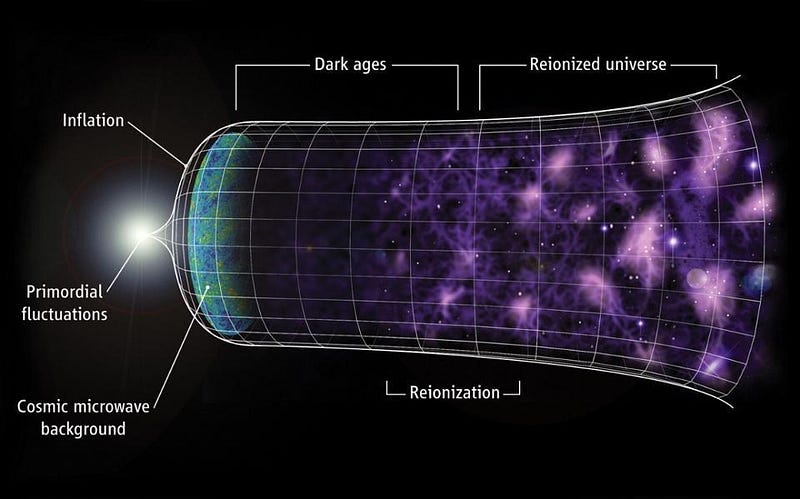

The expanding Universe has been one of the most important discoveries of the past 100 years, and it’s brought with it a revolution in how we conceive of the Universe. It was the key observation that led to the formulation of the Big Bang; it allowed us to discover how stars and galaxies came to exist; it taught us the age of the Universe. Most recently, it led to the discovery of the accelerating Universe, whose cause we commonly call “dark energy.”

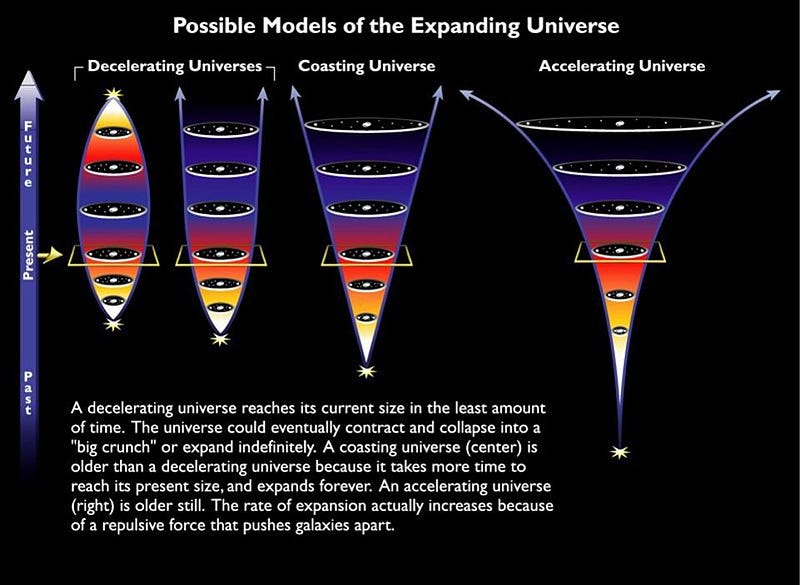

It’s now 20 years since dark energy was first uncovered, though, and we still only have three main classes of possibilities for why the Universe appears to accelerate:

- Vacuum energy, like a cosmological constant, is energy inherent to space itself, and drives the Universe’s expansion.

- Dynamical dark energy, driven by some kind of field that changes over time, could lead to differences in the Universe’s expansion rate depending on when/how you measure it.

- General Relativity could be wrong, and a modification to gravity might explain what we perceive as acceleration.

The evidence, from everything we’ve gathered, strongly points to that first case, where dark energy is a cosmological constant.

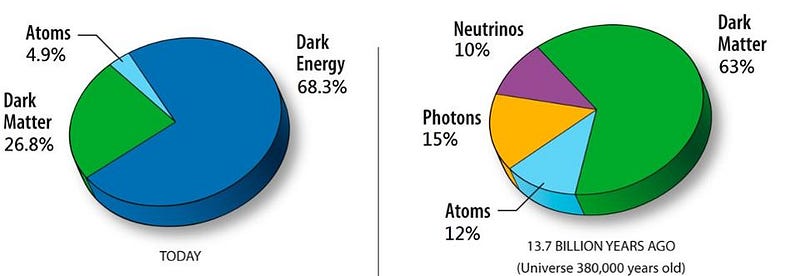

At the dawn of 2018, however, the controversy over the expanding Universe might threaten that picture. Our Universe, made up of 68% dark energy, 27% dark matter, and just 5% “normal” matter (including stars, planets, gas, dust, plasma, black holes, etc.), should be expanding at the same rate regardless of the method you use to measure it. At least, that would be the case if dark energy were truly a cosmological constant, and if dark matter were truly cold and collisionless, interacting only gravitationally. If everyone measured the same rate for the expanding Universe, there would be nothing to challenge this picture, known as standard (or “vanilla”) ΛCDM.
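Why the composition fixes a single expansion history can be illustrated with the Friedmann equation for a flat universe. This is a minimal sketch under stated assumptions: the Universe is spatially flat, radiation is neglected (reasonable at late times), and dark energy has a constant equation of state `w`, with `w = -1` corresponding to the cosmological constant of vanilla ΛCDM. The density fractions are the 68/27/5 split quoted above; the function name `hubble_rate` is illustrative.

```python
import math

# Density fractions quoted in the text: 68% dark energy, 27% dark matter, 5% normal matter.
OMEGA_DE = 0.68
OMEGA_M = 0.27 + 0.05  # dark and normal matter both dilute as (1 + z)^3

def hubble_rate(z, h0, omega_m=OMEGA_M, omega_de=OMEGA_DE, w=-1.0):
    """Expansion rate H(z) in km/s/Mpc for a flat universe of matter plus dark energy.

    Assumptions: flat geometry, radiation neglected, constant equation of state
    w = pressure / (energy density * c^2). For w = -1 (a cosmological constant)
    the dark-energy density stays fixed as the Universe expands.
    """
    matter = omega_m * (1 + z) ** 3
    dark_energy = omega_de * (1 + z) ** (3 * (1 + w))
    return h0 * math.sqrt(matter + dark_energy)

print(hubble_rate(1.0, 70.0))  # about 126 km/s/Mpc, since sqrt(0.32 * 8 + 0.68) = 1.8
```

Changing `w` away from −1 alters H(z) at earlier times even when today’s rate `h0` is held fixed, which is why dark energy that isn’t a cosmological constant would show up as disagreements between measurements probing different epochs.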

But not everyone measures the same rate.

The standard (and oldest) way of measuring the Hubble rate is the cosmic distance ladder. Today, the simplest version has only three rungs. First, you measure the distances to nearby stars directly, through parallax; specifically, you measure the distances to long-period Cepheid variable stars in our own galaxy, whose pulsation periods reveal how intrinsically bright they are. Second, you measure the periods and apparent brightnesses of the same types of Cepheid stars in nearby galaxies, learning how far away those galaxies are. And lastly, some of those galaxies host a specific class of supernovae known as Type Ia supernovae, which you can observe both nearby and many billions of light years away. With just three steps, you can measure the expanding Universe, arriving at a result of 73.24 ± 1.74 km/s/Mpc.
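The arithmetic behind the three rungs can be sketched in a few lines. This is a toy chain, not the analysis behind the 73.24 km/s/Mpc result: it assumes Cepheids of the same period share one absolute magnitude (the idea behind the period-luminosity relation), ignores dust and every other correction, uses a single star per rung, and approximates recession velocity as c·z, which only holds for small redshifts. Every magnitude, parallax, and redshift below is made up, and the function names are hypothetical.

```python
import math

C_KM_S = 299_792.458  # speed of light in km/s

def parallax_distance_pc(parallax_arcsec):
    """Rung 1: distance in parsecs from a trigonometric parallax in arcseconds."""
    return 1.0 / parallax_arcsec

def absolute_magnitude(apparent_mag, distance_pc):
    """Intrinsic brightness implied by an apparent magnitude at a known distance."""
    return apparent_mag - 5.0 * math.log10(distance_pc / 10.0)

def distance_from_magnitudes(apparent_mag, absolute_mag):
    """Invert the distance modulus m - M = 5 log10(d / 10 pc); returns parsecs."""
    return 10.0 * 10.0 ** ((apparent_mag - absolute_mag) / 5.0)

def hubble_constant(redshift, distance_pc):
    """Hubble's law H0 = v / d with v ~ c z (low-redshift approximation), in km/s/Mpc."""
    return C_KM_S * redshift / (distance_pc / 1.0e6)

# All observed values below are hypothetical, chosen for round numbers.
# Rung 1: a Milky Way Cepheid with a measured parallax calibrates Cepheid brightness.
d_local = parallax_distance_pc(0.001)               # 1000 pc
M_cepheid = absolute_magnitude(6.0, d_local)        # -4.0
# Rung 2: a Cepheid with the same period in a nearby galaxy gives that galaxy's distance.
d_host = distance_from_magnitudes(26.0, M_cepheid)  # 1e7 pc = 10 Mpc
# Rung 3: a Type Ia supernova in that galaxy calibrates supernova brightness...
M_sn = absolute_magnitude(10.7, d_host)             # -19.3
# ...so a distant supernova's apparent magnitude and redshift yield H0.
d_far = distance_from_magnitudes(15.7, M_sn)        # 1e8 pc = 100 Mpc
h0 = hubble_constant(0.0244, d_far)
print(f"H0 ~ {h0:.1f} km/s/Mpc")                    # H0 ~ 73.1 km/s/Mpc
```

Each rung only passes one calibrated number (an absolute magnitude or a distance) to the next, which is why an error in any rung propagates all the way to H0.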

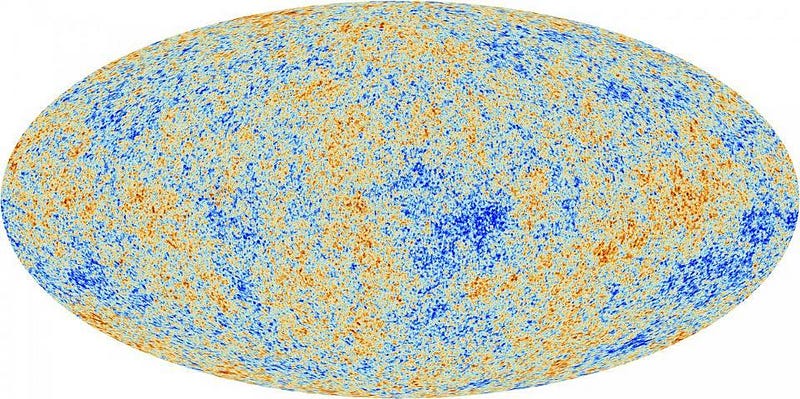

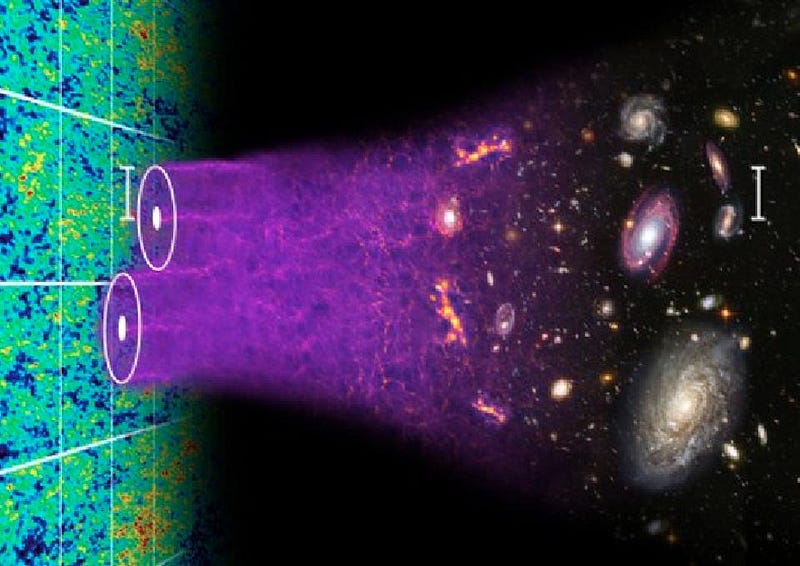

But if you look at the early Universe, before there were stars and galaxies, all you had was the ionized plasma of normal matter, the hot mix of neutrinos and photons that acts as radiation, and the cold, slow-moving mass of dark matter. Because gravitation tries to pull matter together while radiation pushes back and smooths out overdense regions, we should get a specific pattern of density and temperature fluctuations. This pattern not only shows up in the Cosmic Microwave Background, the Big Bang’s leftover glow, but also sets a preferred distance scale for galaxy correlations. These methods of measuring the Hubble rate give a significantly different result: 66.9 ± 0.6 km/s/Mpc.
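How “incompatible” the two quoted results are can be quantified by asking how many combined standard deviations separate them. This is a minimal sketch that assumes the ± values are independent, Gaussian 1σ uncertainties, which is a simplification; the function name `tension_sigma` is illustrative.

```python
import math

def tension_sigma(a, sigma_a, b, sigma_b):
    """Gap between two independent measurements in units of their combined uncertainty."""
    return abs(a - b) / math.hypot(sigma_a, sigma_b)

ladder = (73.24, 1.74)  # cosmic distance ladder, km/s/Mpc
relics = (66.9, 0.6)    # CMB plus galaxy correlations, km/s/Mpc

percent_faster = 100 * (ladder[0] / relics[0] - 1)
sigma = tension_sigma(*ladder, *relics)
print(f"{percent_faster:.1f}% faster, {sigma:.1f} sigma apart")  # 9.5% faster, 3.4 sigma apart
```

Using the rounded 73 and 67 km/s/Mpc from the opening paragraph instead, the same ratio gives about 9%.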

Many new-physics explanations have been proposed to resolve this discrepancy, yet most have run into tremendous difficulties.

- Dark energy might not be a cosmological constant, with a specific balance between outward (accelerating) pressure and inward (gravitating) energy density, but might have a different balance.

- Dark energy could change over time, where it was stronger (or weaker) in the past. This would represent a change in the dark energy equation-of-state over time.

- There could be a contribution of spatial curvature, which represents an additional component affecting the Universe’s expansion rate at various scales.

- There could be an extra species of radiation (or neutrino) in the early Universe, which would alter the pattern of density-and-temperature fluctuations that we see.

- Or we could add in a new type of interaction, either between dark matter and radiation, or by mixing in a new type of “dark radiation” into the Universe, to change the physics of the early Universe.

That last possibility doesn’t share the problem facing the other suggestions, all of which are tightly constrained by a variety of observations. Because we know so little about dark matter, yet dark matter is so important to the formation of large-scale structure in our Universe, any interaction that affects it could alter the density fluctuations that we see. This could impact both the characteristic scale of the Cosmic Microwave Background fluctuations and that of the galaxies that form much later.
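The link between that scale and the Hubble rate works like a standard ruler. The sound horizon (how far sound waves in the early plasma could travel before the CMB was released) appears on the sky at an observed angle θ* = r_s / D, where D is the comoving distance to the CMB. For a fixed observed angle, a smaller ruler means a closer CMB, which in turn means a faster-expanding Universe. The toy sketch below is only an illustration under loud assumptions: a flat universe of matter, radiation, and a cosmological constant; the physical densities ω = Ω h² held fixed; the sound horizon treated as an input rather than computed from plasma physics; and θ* ≈ 0.0104109 rad, r_s ≈ 144.4 Mpc, ω_m ≈ 0.143 taken as approximate Planck-like values. All names are hypothetical.

```python
import math

C_KM_S = 299_792.458           # speed of light, km/s
A_STAR = 1.0 / (1.0 + 1090.0)  # scale factor at last scattering, z* ~ 1090 (approximate)
OMEGA_M_H2 = 0.143             # physical matter density (dark + normal); assumed, Planck-like
OMEGA_R_H2 = 4.18e-5           # photons plus three massless neutrino species; approximate
THETA_STAR = 0.0104109         # observed CMB acoustic angle in radians; assumed, Planck-like

def distance_to_last_scattering(h, steps=4_000):
    """Comoving distance (Mpc) to z* in a flat universe of matter, radiation and Lambda.

    D = (c / 100) * integral from a* to 1 of da / sqrt(w_m a + w_r + w_L a^4), where
    w_x = Omega_x h^2 and flatness fixes w_L = h^2 - w_m - w_r. Simpson's rule; steps even.
    """
    omega_l_h2 = h * h - OMEGA_M_H2 - OMEGA_R_H2

    def integrand(a):
        return 1.0 / math.sqrt(OMEGA_M_H2 * a + OMEGA_R_H2 + omega_l_h2 * a ** 4)

    da = (1.0 - A_STAR) / steps
    total = integrand(A_STAR) + integrand(1.0)
    for i in range(1, steps):
        weight = 4.0 if i % 2 else 2.0
        total += weight * integrand(A_STAR + i * da)
    return (C_KM_S / 100.0) * total * da / 3.0

def infer_h(theta_star, sound_horizon_mpc, lo=0.5, hi=1.0, iterations=40):
    """Bisect for the h whose predicted angle sound_horizon / D(h) matches theta_star.

    D(h) shrinks as h grows, so the predicted angle grows monotonically with h.
    """
    for _ in range(iterations):
        mid = 0.5 * (lo + hi)
        if sound_horizon_mpc / distance_to_last_scattering(mid) < theta_star:
            lo = mid  # predicted angle too small: the CMB must be closer, so h must be larger
        else:
            hi = mid
    return 0.5 * (lo + hi)

h_relic = infer_h(THETA_STAR, 144.4)           # sound horizon ~144.4 Mpc (assumed)
h_smaller = infer_h(THETA_STAR, 144.4 * 0.98)  # same observed angle, ruler 2% smaller
print(f"h = {h_relic:.3f} vs {h_smaller:.3f}")
```

With these inputs the first value should land in the high 0.6s, near the relic-method result, and the second comes out higher. Real analyses fit all cosmological parameters simultaneously, so the size of the shift in this toy should not be taken literally; only its direction carries over.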

If photons, neutrinos, or some new type of dark radiation (one that interacts with dark matter but not with any of the normal particles) has a non-zero cross-section with dark matter, it could bias measurements of the Hubble rate toward an artificially low value, but only for one type of measurement: the kind you get from measuring these leftover relics. If interactions between dark matter and radiation are real, they might not only explain this cosmic controversy, but could be our first hint of how dark matter interacts directly with other particles. If we’re lucky, they could even show us how to finally detect dark matter directly.

Currently, the fact that distance ladder measurements say the Universe expands 9% faster than the leftover relic method is one of the greatest puzzles in modern cosmology. Whether that’s because there’s a systematic error in one of the two methods used to measure the expansion rate or because there’s new physics afoot is still undetermined, but it’s vital to remain open-minded to both possibilities. As improvements are made to parallax data, as more Cepheids are found, and as we come to better understand the rungs of the distance ladder, it becomes harder and harder to justify blaming systematics. The resolution to this paradox may be new physics, after all. And if it is, it just might teach us something about the dark side of the Universe.

Ethan Siegel is the author of Beyond the Galaxy and Treknology. You can pre-order his third book, currently in development: the Encyclopaedia Cosmologica.